During the last several days I have been working on setting up a Processing sketch that can work with my Physical Computing media controller and serve as my mid-term project for the Introduction to Computational Media course. I had been interested in the design and development of media controllers long before arriving at ITP, and this project gave me the opportunity to start some hands-on exploration.

In my previous post I discussed how we chose the solution for playing and controlling our audio: we decided to use Processing (and the Arduino) to control Ableton Live. Today I will provide an overview of how I developed the code for this application and some of the interface considerations associated with designing software that can work across physical and screen-based interfaces.

My longer-term objective is to create MIDI controllers for audio and video applications using touchscreen and gestural interfaces. The interfaces that I am designing would ideally evolve to work on multi-touch surfaces. I hope to explore my interest in gestural interaction through my current physical computing project and future projects.

Developing the Sketches

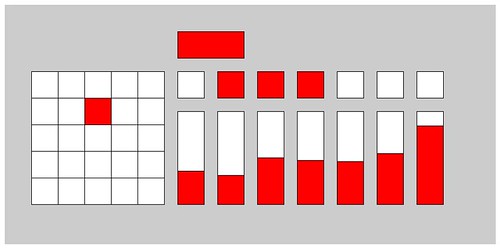

Since the physical computing project requires three basic types of controls, which are also the foundation of the media interface for my computational media mid-term, I decided to start by writing the code for these three basic elements. I set out to create code that could be easily reused so that I could add more elements with little effort. Here is a link to the sketch on openprocessing.org, where you can also view the full code for the controller pictured here (v1.0).

The process I used to create these sketches included the following steps: (1) creating the functionality associated with each element, separately; (2) creating a class for each element; (3) integrating objects of each class in Processing; (4) testing Processing with OSCulator and Ableton; (5) creating the Serial protocol to communicate with the Arduino; (6) testing the sensors; (7) writing the final code for the Arduino; (8) testing the Serial connection to the Arduino; (9) calibrating the physical computing interface (whenever and wherever we set it up).

I have already made two posts on this subject (go to phase 1 post, go to phase 2 post). Today, however, I can attest that I have completed the vast majority of the work. The last Processing sketch that I shared featured a mostly completed Matrix object that included functions for OSC communication. The serial communication protocol had also been defined.

The many additions to the sketch include button and slider elements (each in its own class), a control panel (that holds the buttons and sliders), and a version of the application that features multiple buttons and sliders. The main updates to existing features include changes to the Serial communication protocol (to support additional sliders and matrices) and updates to the OSC communication code to ensure that messages are only sent when values change rather than continuously.

For the slider object I used the mouseDragged() function for the very first time. I had to debug my code for a while to get the visual slider to work properly. The button was easy to code from a visual perspective. The challenge I faced was in structuring the OSC messages so that I could send two separate and opposing messages on alternating clicks. This is important because Ableton Live uses separate buttons for starting and stopping clips, so I had to find a way to enable a single button to perform both functions.
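The single-button idea can be sketched roughly as follows. This is a minimal illustration, not the code from the actual sketch: the class name `ToggleButton` and the OSC address strings are hypothetical, and the real addresses depend on how OSCulator and Ableton Live are mapped.

```java
// Hypothetical sketch: one on-screen button that alternates between two
// opposing OSC addresses. The class name and address strings are
// placeholders, not the ones used in the actual sketch.
class ToggleButton {
  final String startAddress;  // address to send when the clip should start
  final String stopAddress;   // address to send when the clip should stop
  boolean playing = false;    // tracks whether the clip is currently playing

  ToggleButton(String startAddress, String stopAddress) {
    this.startAddress = startAddress;
    this.stopAddress = stopAddress;
  }

  // Call once per mouse click. Flips the state and returns the OSC
  // address that should be sent for this click.
  String click() {
    playing = !playing;
    return playing ? startAddress : stopAddress;
  }
}
```

In a Processing sketch, `click()` would presumably be called from `mousePressed()` when the mouse is over the button, and the returned address handed to whatever OSC library the sketch uses.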

The serial communication protocol update was easy to implement, so I will not delve into it here. Changing the OSC communication protocol required a bit more work. I created a previous-state variable in each object class to enable verification of whether a change had occurred. The logic was implemented as an “if” statement in the OSC message function.
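A minimal sketch of the previous-state check described above, assuming an integer control value in the MIDI range 0 to 127. The names `SliderState`, `previousValue` and `shouldSend()` are illustrative and not taken from the actual sketch.

```java
// Hypothetical sketch of the "only send on change" logic. Assumes the
// control value is an int in the MIDI range 0..127.
class SliderState {
  int value = 0;           // current value of the control
  int previousValue = -1;  // last value sent; -1 is outside 0..127, so
                           // the first real value always counts as a change

  void set(int newValue) {
    value = newValue;
  }

  // Returns true only when the value differs from the last one sent,
  // and records the current value as sent in that case.
  boolean shouldSend() {
    if (value != previousValue) {
      previousValue = value;
      return true;
    }
    return false;
  }
}
```

Calling `shouldSend()` on every frame of `draw()` would then let the sketch emit an OSC message only on the frames where the value actually changed.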

Evolving the Controller

Here is an overview of my plans for this project: I plan to expand the current media controller with a few effect grids and the ability to select individual channels to apply effects to. In order to do this I have to create new functions for the matrix class that enable me to set the X and Y matrix map values. I also want to work on improving the overall aesthetics of the interface (while keeping its minimal feel).

From a sketch-architecture perspective I am considering creating a parent class for all buttons, grids and sliders. It would feature the attributes and functionality that are common to all elements. Common attributes include location, size and color; common functionality includes detecting the mouse location relative to the object and OSC communication.
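One way such a parent class might look is sketched below. All names (`ControlElement`, `contains()`, `Slider`, `drag()`) are assumptions made for illustration, and the color is stored as an `int` because that is what Processing's `color` type is underneath.

```java
// Hypothetical parent class holding what all elements share:
// location, size, color, an OSC address, and a mouse hit test.
class ControlElement {
  float x, y;         // top-left corner of the element
  float w, h;         // width and height
  int fillColor;      // Processing's color type is an int (ARGB)
  String oscAddress;  // placeholder address for this element's messages

  ControlElement(float x, float y, float w, float h,
                 int fillColor, String oscAddress) {
    this.x = x;
    this.y = y;
    this.w = w;
    this.h = h;
    this.fillColor = fillColor;
    this.oscAddress = oscAddress;
  }

  // True when the point (px, py) lies inside the element's rectangle.
  boolean contains(float px, float py) {
    return px >= x && px <= x + w && py >= y && py <= y + h;
  }
}

// Example subclass: a horizontal slider with a normalized value.
class Slider extends ControlElement {
  float value = 0f;  // 0.0 at the left edge, 1.0 at the right edge

  Slider(float x, float y, float w, float h,
         int fillColor, String oscAddress) {
    super(x, y, w, h, fillColor, oscAddress);
  }

  // Maps a horizontal pointer position to 0..1, clamped to the track.
  void drag(float px) {
    value = Math.max(0f, Math.min(1f, (px - x) / w));
  }
}
```

In a sketch, `contains(mouseX, mouseY)` would serve as the shared hover and click test, while each subclass adds only its own behavior.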

Questions for Class

Here is a question that came up during my development of this sketch (Dan, I need your help here). Can I use the translate(), pushMatrix() and popMatrix() commands just to capture the current mouse location? This would be an easier way to check whether the mouse is hovering over an object.

Friday, October 30, 2009
